Connectivity Summary

An out-of-the-box connector is available for Hive databases secured with Kerberos. It supports crawling database objects, profiling sample data, and building lineage.

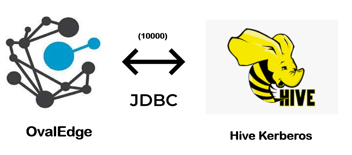

The connector connects to Hive through a JDBC driver, which is included in the platform.

The connector currently supports the following editions and versions of Hive:

Edition: CDH

Version: 5.0, 6.0, 7.0

The drivers used by the connector are given below:

Driver / API: Hive JDBC uber jar

Version: 7.4

Details: org.apache.hive.jdbc.HiveDriver - Hive JDBC "uber" jar

Technical Specifications

The connector capabilities are shown below:

Crawling

| Feature | Supported Objects | Remarks |
|---|---|---|
| Crawling | Tables | |
| | Table Columns | Supported data types: all data types, including nested data types such as arrays. |

Profiling

Please see Profiling Data for more details on profiling.

| Feature | Support | Remarks |
|---|---|---|
| Table Profiling | Row count, Columns count, View sample data | |
| View Profiling | Row count, Columns count, View sample data | View is treated as a table for profiling purposes |
| Column Profiling | Min, Max, Null count, distinct, top 50 values | |
| Full Profiling | Supported | |
| Sample Profiling | Not Supported | |

Lineage Building

| Lineage entities | Details |
|---|---|
| Table lineage | Supported |
| Column lineage | Supported |
| Lineage Sources | Stored procedures, functions, triggers, views, SQL queries (from Query Sheet), query logs and HQL files |

Querying

| Operation | Details |
|---|---|
| Select | Supported |
| Insert | Supported |
| Update | Supported |
| Delete | Supported |
| Joins within database | Supported |
| Joins outside database | Supported |
| Aggregations | Supported |
| Group By | Supported |
| Order By | Supported |

By default, the service account provided for the connector is used for all query operations. If the service account has write privileges, Insert / Update / Delete queries can be executed.
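To illustrate the distinction, the sketch below flags statements that would need write privileges on the service account. The helper name `needs_write_privileges` and the keyword list are assumptions made for illustration; this is not how the connector itself decides what to run.

```python
# Hypothetical helper, not part of the connector: flags statements that
# begin with Insert, Update, or Delete, the operations that require
# write privileges on the service account.
WRITE_KEYWORDS = {"INSERT", "UPDATE", "DELETE"}


def needs_write_privileges(sql: str) -> bool:
    """Return True if the first keyword of the statement is a write operation."""
    stripped = sql.lstrip()
    if not stripped:
        return False
    first_word = stripped.split(None, 1)[0].upper()
    return first_word in WRITE_KEYWORDS


if __name__ == "__main__":
    for statement in ("SELECT * FROM sales", "insert into sales values (1)"):
        print(statement, "->", needs_write_privileges(statement))
```

A check like this looks only at the leading keyword, so it is a rough pre-flight hint rather than a substitute for the privileges enforced by Hive.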

Pre-requisites

To use the connector, the following need to be available:

- Connection details, as specified in the following section.

- An admin / service account, for crawling and profiling. The minimum privileges required are:

| Operation | Access Permission |
|---|---|
| Connection validate | Yes |
| Crawl schemas | Yes |
| Crawl tables | Yes |
| Profile schemas, tables | Yes |
| Query logs | Yes |
| Get views, procedures, function code | Yes |

- The JDBC driver is provided by default. If it needs to be changed, add the Hive drivers to the OvalEdge jar path so that the platform can communicate with the Hive database.

Check the Configuration section for further details on how to add the drivers to the jar path.

- Kerberos authentication requires the krb5.conf and ovaledge.keytab files.

- For krb5.conf file setup, see the following document:

https://docs.google.com/document/d/1VWOazfDAtoeyyDPU47o2Uws9QyvQaNeog1qX36sfrpY/edit
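Before configuring the connection, it can help to confirm that both Kerberos files are present and readable on the host. The following is a minimal sketch; the function name and the example paths are assumptions, so substitute the locations used in your environment.

```python
import os


def missing_kerberos_files(krb5_conf_path, keytab_path):
    """Return the given paths that do not exist or cannot be read."""
    return [
        path
        for path in (krb5_conf_path, keytab_path)
        if not (os.path.isfile(path) and os.access(path, os.R_OK))
    ]


if __name__ == "__main__":
    # Hypothetical locations; replace with your own krb5.conf and keytab paths.
    missing = missing_kerberos_files("/etc/krb5.conf", "/opt/keytabs/ovaledge.keytab")
    if missing:
        print("Missing or unreadable:", ", ".join(missing))
    else:
        print("Both Kerberos files are present and readable.")
```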

Connection Details

The following connection settings should be added for connecting to a Hive Kerberos database:

- Database Type: Hive

- Authentication: Kerberos Authentication

- Connection Name: Enter a name for the Hive Kerberos connection. This is a reference name that identifies the connection in OvalEdge. Example: Hive Kerberos

- Server: Hive Kerberos Server IP

- Port number: 10000

- Database: Name of the database to connect.

- Driver: org.apache.hive.jdbc.HiveDriver

- Driver Name: JDBC driver name for org.apache.hive.jdbc.HiveDriver. It will be auto-populated.

Example: org.apache.hive.jdbc.HiveDriver

- Connection String: Hive Kerberos connection string. Set the Connection String toggle to automatic to build the string from the credentials provided. Alternatively, enter the string manually.

Format: jdbc:hive2://{server}:{port}/{sid};principal=hive/undefined

Example: jdbc:hive2://18.220.154.229:10000/default;principal=hive/ec2-18-220-154-229.us-east-2.compute.amazonaws.com@US-EAST-2.COMPUTE.INTERNAL

- Keytab: Provide the path to the keytab file.
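When entering the connection string manually, it can be assembled from its parts. The sketch below follows the Example line above; the `hive/{host}@{REALM}` shape of the principal is inferred from that example rather than from the Format line, and the function and parameter names are hypothetical.

```python
def build_hive_kerberos_url(server, port, database, principal_host, realm):
    """Assemble a Hive JDBC URL with a Kerberos service principal.

    The principal layout (hive/<host>@<REALM>) is an assumption based on
    the example connection string in this section.
    """
    return (
        f"jdbc:hive2://{server}:{port}/{database}"
        f";principal=hive/{principal_host}@{realm}"
    )


if __name__ == "__main__":
    url = build_hive_kerberos_url(
        server="18.220.154.229",
        port=10000,
        database="default",
        principal_host="ec2-18-220-154-229.us-east-2.compute.amazonaws.com",
        realm="US-EAST-2.COMPUTE.INTERNAL",
    )
    print(url)
```

With these inputs the function reproduces the example connection string shown above.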

Once connectivity is established, additional configurations for Crawling and Profiling can be specified.